How We Tested Embucket's Snowflake Compatibility with SQL Logic Tests (SLT)

Andrey Oleksiuk

TL;DR

As a Product Research team at Embucket, we were tasked with developing a test suite capable of measuring our compatibility with Snowflake’s SQL dialect. We chose an SQL Logic Tests framework, implemented an auto-updated visualization of results, and built an AI agent based on it (the AI agent is not built just so I can have a hyped keyword in a blogpost, I promise).

Why Snowflake wire-compatibility?

At Embucket, we are building a next-gen autonomous data infrastructure. At the moment of writing this, our first version of the product is still in development, so a detailed description of what it is is going to come out soon. However, feel free to check out our open source codebase: https://github.com/Embucket/embucket.

What’s important for this article is that our product implements a different way for people to run SQL against their data lake. We wanted to make adoption as easy as possible. And what is easier than ZERO CHANGES to your existing data pipeline code?

So then we narrowed it down to a more specific use case - dbt workloads on Snowflake. Snowflake - because it is well-known in the industry, lots of companies use it. dbt workloads - because it is probably the largest source of workloads on Snowflake. We don’t know the exact numbers, but we know that Snowflake’s own customer-usage studies put dbt at the center of its ecosystem (In Snowflake’s 2023 Modern Marketing Data Stack (usage analysis of ~8,100 Snowflake customers), dbt was named a Leader in the Integration & Modeling category. Source: https://www.snowflake.com/en/news/press-releases/ai-and-machine-learning-usage-up-15-5-among-marketing-teams-snowflake-modern-marketing-data-stack-report-finds/?utm_source=chatgpt.com).

Setting compatibility goal

We needed a metric. THE metric. The obvious choice was % of compatibility, with 100% being the end goal. But how do you define 100% of something complicated like this?

First, we looked at the Snowflake SQL reference. Most of the functionality falls into two buckets: SQL commands and SQL functions.

Second, we sourced a bunch of dbt workloads that are open source. We combined them into a single corpus and then extracted the commands and functions from it.

And finally, we did a “dbt_commands_and_functions LEFT JOIN snowflake_commands_and_functions”. So, for each distinct piece of SQL functionality from dbt workloads, we found the corresponding section in the Snowflake reference.

Now it’s time to turn all of this into tests.

What is SQL Logic Tests (SLT)?

Sqllogictest was originally a program designed to test the correctness of SQLite (https://www.sqlite.org/sqllogictest/doc/trunk/about.wiki). We decided not to reinvent the wheel and use it as a standard for our tests. Especially given that it is used by modern SQL engines (https://duckdb.org/docs/stable/dev/sqllogictest/intro, https://datafusion.apache.org/contributor-guide/testing.html).

Turning documented behavior into executable tests

The hard part was done. The only thing left is pretty trivial - generate the actual tests. Well, we thought it was trivial because we’ve generated one test using an LLM, and the result was wonderful. Of course, it didn’t hold true when we tried to generate the whole test suite.

There were a bunch of stupid mistakes made by an LLM. There were queries with a non-deterministic output (like random number generators). There were correctness issues with the Snowflake SQL reference. Turned out we need to do a bunch of manual work.

Finally, after months of development, we were happy with the SQL Logic Tests corpus we created: https://github.com/Embucket/embucket/tree/main/test/sql/bronze_scope.

Here is how tests look like:

exclude-from-coverage

statement ok

CREATE OR REPLACE TABLE tab1 (col1 INTEGER);

exclude-from-coverage

statement ok

CREATE OR REPLACE TABLE tab2 (col1 INTEGER);

exclude-from-coverage

statement ok

INSERT INTO tab1 VALUES

(2),

(3),

(4);

exclude-from-coverage

statement ok

INSERT INTO tab2 (col1) VALUES

(1),

(2),

(2),

(3);

query TT

SELECT tab1.col1, tab2.col1

FROM tab1 INNER JOIN tab2

ON tab2.col1 = tab1.col1

ORDER BY 1,2

----

2 2

2 2

3 3

query TT

SELECT tab1.col1, tab2.col1

FROM tab1 LEFT OUTER JOIN tab2

ON tab2.col1 = tab1.col1

ORDER BY 1,2

----

2 2

2 2

3 3

4 NULL

query TT

SELECT tab1.col1, tab2.col1

FROM tab1 RIGHT OUTER JOIN tab2

ON tab2.col1 = tab1.col1

ORDER BY 1,2

----

2 2

2 2

3 3

NULL 1

query TT

SELECT tab1.col1, tab2.col1

FROM tab1 FULL OUTER JOIN tab2

ON tab2.col1 = tab1.col1

ORDER BY 1,2

----

2 2

2 2

3 3

4 NULL

NULL 1We even had to come up with the extension of the SLT standard to exclude the statements that set up the test, but don’t actually reflect the functionality coverage.

AI agent (of course) based on SLT results

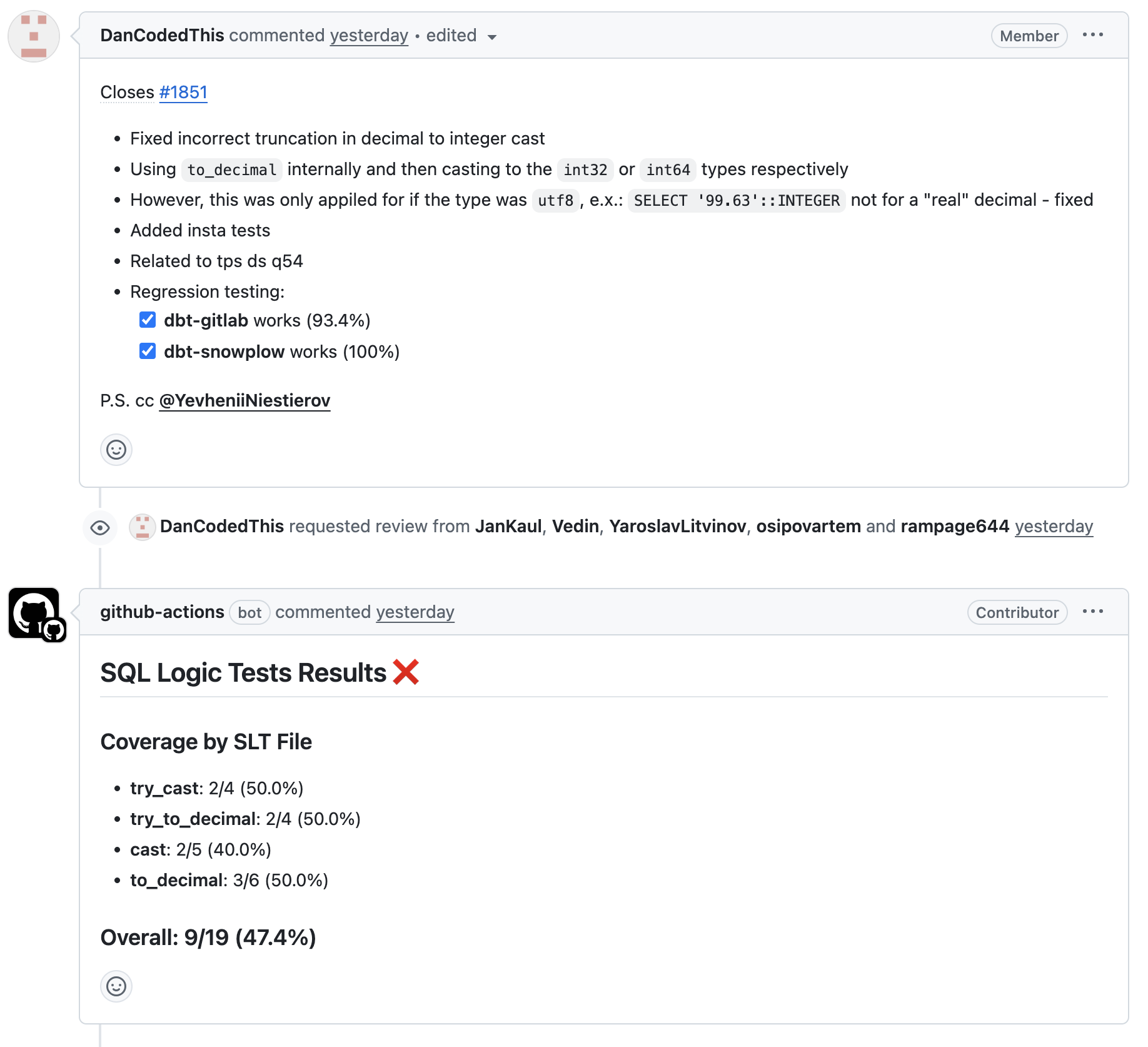

Having a set of tests that measure our SQL compatibility with Snowflake, we had the opportunity to give feedback to engineers on whether their change improved the coverage, or if there was a regression. Ou We wanted to give feedback on each commit, even while your PR is still in development. It turned out that spinning up our system and running hundreds of SQL Logic Tests was just too slow and expensive. So we have built a bot that, for each commit, analyses the changes and then maps specific tests to changes. And if the updated functionality most likely won’t affect the SLT coverage, then skip the job entirely.

This is a real-life example of how it works:

Source: https://github.com/Embucket/embucket/pull/1857.

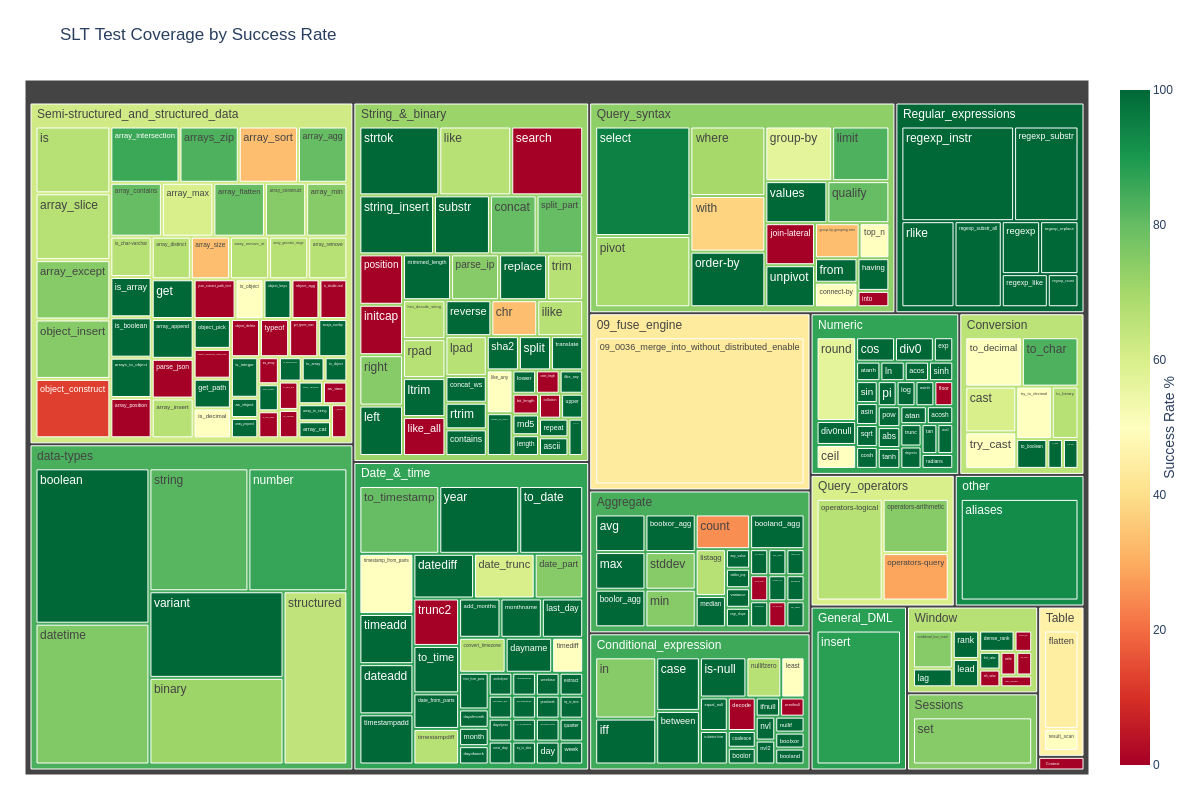

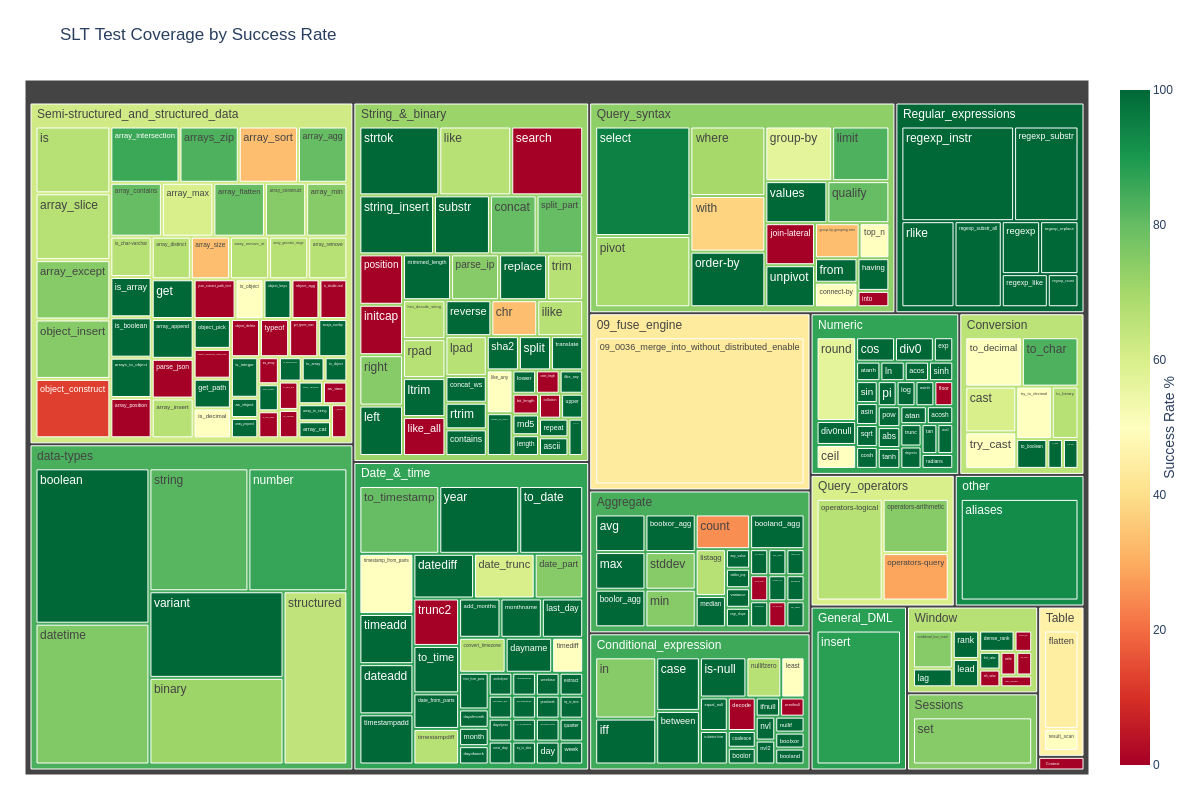

Visualizing test results

I believe in the power of visualizing metrics. That’s why we came up with the way to visualize coverage and also automatically update it so everyone can see the current state of things. After a couple of iterations, we ended up with this thing:

Source: https://github.com/Embucket/embucket/tree/main/test.

We embedded this thing right into the repo and made it auto-update on each commit. This way, everyone can see how we are doing in terms of SQL compatibility with Snowflake.

Conclusion

Now you know more about our approach to developing a wire-compatibility with Snowflake. We take the compatibility seriously - SLT is just a part of our whole test suite.

Hopefully, you can take some ideas from our approach and apply them to your testing practices.